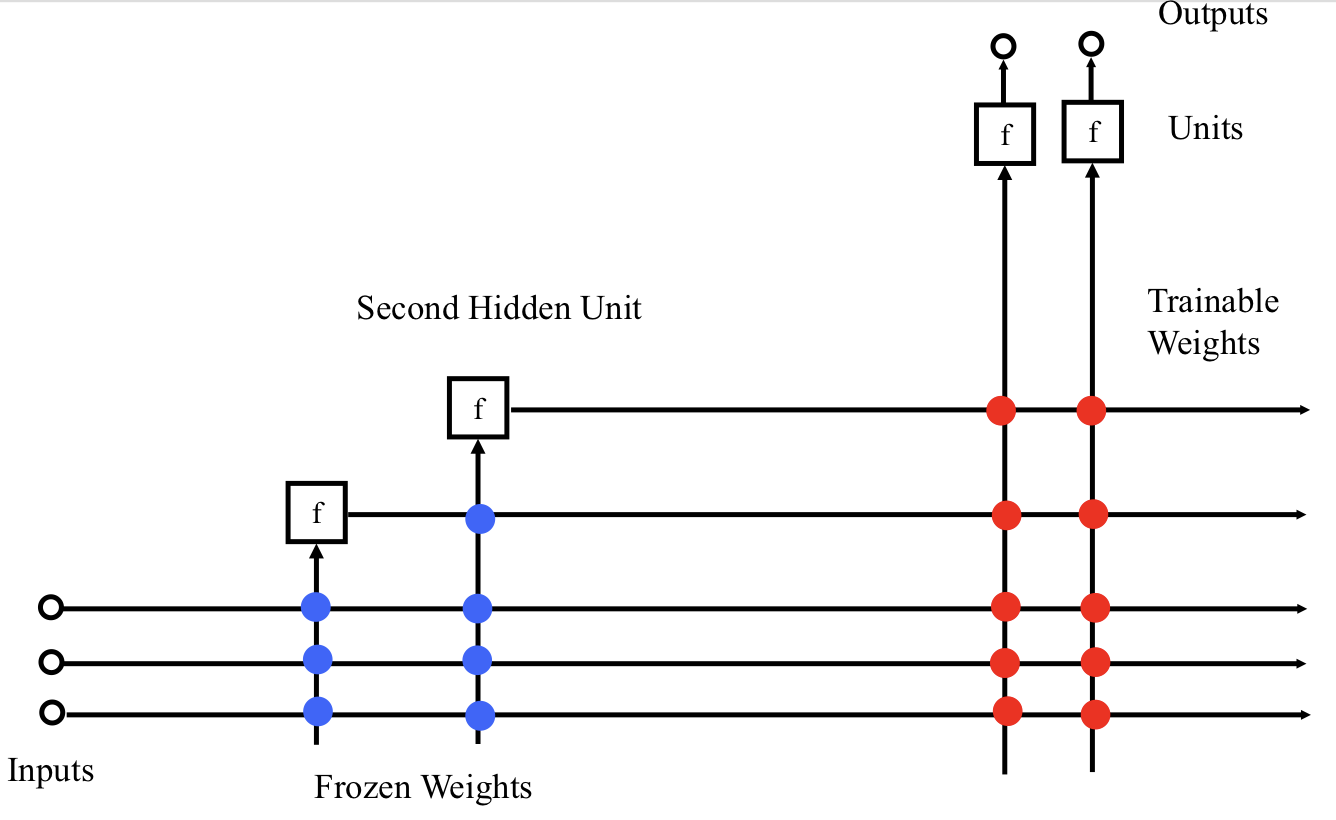

An alternative way to think about the network architecture

Cascade-Correlation Algorithm

- Start with direct I/O connections only. No hidden units.

- Train output-layer weights using BP or Quickprop.

- If error is now acceptable, quit.

- Otherwise, create one new hidden unit offline:

  - Create a pool of candidate units. Each receives all available inputs (the network inputs plus the outputs of any existing hidden units). Candidate outputs are not yet connected to anything.

  - Train each candidate's incoming weights to maximize the match (covariance) between that unit’s output and the residual error.

  - When all candidates are quiescent, tenure the winner and add it to the active net. Kill all the other candidates.

- Retrain the output-layer weights and repeat the cycle until the error is acceptable.
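The cycle above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the original implementation: output weights are fit by linear least squares as a stand-in for BP or Quickprop, candidates use tanh activations and plain gradient ascent, and every function name and hyperparameter (`pool_size`, `max_hidden`, `tol`, `steps`, `lr`) is hypothetical. The candidate score is taken to be the summed magnitude of the covariance between a candidate's output and the residual error at each output unit.

```python
import numpy as np

def candidate_score(v, residual):
    """S = sum_o | sum_p (v_p - mean(v)) * (E_po - mean_p(E_o)) |."""
    v_c = v - v.mean()
    e_c = residual - residual.mean(axis=0)
    return np.abs(v_c @ e_c).sum()

def fit_output_weights(features, targets):
    # Assumption: linear outputs solved by least squares,
    # standing in for BP/Quickprop training of the output layer.
    w, *_ = np.linalg.lstsq(features, targets, rcond=None)
    return w

def train_candidate(features, residual, rng, steps=500, lr=0.1):
    """Gradient ascent on S for one candidate's incoming weights."""
    w = rng.normal(scale=0.5, size=features.shape[1])
    e_c = residual - residual.mean(axis=0)        # centered residual, shape (P, O)
    for _ in range(steps):
        v = np.tanh(features @ w)
        cov = (v - v.mean()) @ e_c                # one covariance per output unit
        # dS/dw = sum_o sign(cov_o) * sum_p e_c[p, o] * tanh'(net_p) * x_p
        delta = (e_c @ np.sign(cov)) * (1.0 - v ** 2)
        w += lr * features.T @ delta
    return w

def cascade_correlation(x, y, max_hidden=3, pool_size=8, tol=1e-3, seed=0):
    rng = np.random.default_rng(seed)
    features = np.hstack([x, np.ones((len(x), 1))])   # direct inputs + bias
    hidden, errors = [], []                           # hidden holds frozen weights
    while True:
        w_out = fit_output_weights(features, y)       # (re)train output layer only
        residual = features @ w_out - y
        errors.append(float((residual ** 2).mean()))
        if errors[-1] < tol or len(hidden) >= max_hidden:
            return hidden, w_out, errors
        # Candidate pool: each sees all inputs and all earlier hidden units.
        pool = [train_candidate(features, residual, rng) for _ in range(pool_size)]
        best = max(pool, key=lambda w: candidate_score(np.tanh(features @ w), residual))
        hidden.append(best)                           # tenure the winner, drop the rest
        features = np.hstack([features, np.tanh(features @ best)[:, None]])

# Toy usage: XOR, which direct input-output connections cannot fit.
x = np.array([[0., 0.], [0., 1.], [1., 0.], [1., 1.]])
y = np.array([[0.], [1.], [1.], [0.]])
hidden, w_out, errors = cascade_correlation(x, y)
```

On XOR the direct-connection net can do no better than predicting 0.5 everywhere (mean squared error 0.25). Each tenured unit adds one frozen feature column, so the refit training error in `errors` cannot increase from one cycle to the next.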

Why Is Backprop So Slow?

- Moving Targets

  - All hidden units are being trained at once, changing the environment seen by the other units as they train.

- Herd Effect

  - Each unit must find a distinct job -- some component of the error to correct.

  - All units scramble for the most important jobs. No central authority or communication.

  - Once a job is taken, it disappears and units head for the next-best job, including the unit that took the best job.

  - This is a very inefficient way to assign a distinct useful job to each unit.

Advantages of Cascade Correlation

- No need to guess size and topology of net in advance.

- Can build deep nets with higher-order features.

- Much faster than Backprop or Quickprop.

- Trains just one layer of weights at a time (fast).

- Works on smaller training sets (in some cases, at least).

- Old feature detectors are frozen, not cannibalized, so good for incremental “curriculum” training.

- Good for parallel implementation.
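One place the algorithm parallelizes naturally is the candidate pool: candidates do not interact while training, so each can run on its own worker. The following is a minimal sketch, assuming a tanh candidate trained by gradient ascent on the covariance score. `train_candidate`, the seeds, and the toy `features` and `residual` arrays are illustrative only, and threads are used purely for simplicity (separate processes or machines would be the more realistic choice for CPU-bound training).

```python
from concurrent.futures import ThreadPoolExecutor
import numpy as np

def train_candidate(seed, features, residual, steps=200, lr=0.1):
    """Train one candidate independently; returns (score, weights)."""
    rng = np.random.default_rng(seed)             # private RNG per candidate
    w = rng.normal(scale=0.5, size=features.shape[1])
    e_c = residual - residual.mean(axis=0)        # centered residual error
    for _ in range(steps):
        v = np.tanh(features @ w)
        cov = v @ e_c                             # equals the covariance sum, since e_c is centered
        w += lr * features.T @ ((e_c @ np.sign(cov)) * (1.0 - v ** 2))
    score = np.abs(np.tanh(features @ w) @ e_c).sum()
    return score, w

# Hypothetical toy data: XOR inputs plus a bias column, and the residual
# left by a net that predicts 0.5 for every pattern.
features = np.array([[0., 0., 1.], [0., 1., 1.], [1., 0., 1.], [1., 1., 1.]])
residual = np.array([[0.5], [-0.5], [-0.5], [0.5]])

# Each candidate trains with no communication; only the final scores are compared.
with ThreadPoolExecutor(max_workers=4) as pool:
    results = list(pool.map(lambda s: train_candidate(s, features, residual), range(8)))

best_score, best_w = max(results, key=lambda r: r[0])
```

Because every candidate owns its random generator and weight vector, the parallel run produces the same weights as a serial loop over the same seeds.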